Adding an HDD to a Virtual Machine.

Steps to create a virtio HDD:

- First, create an image using the qemu-img command.
- Add the image to the VM as a virtio HDD.
- The disk will appear as /dev/vda in the VM; format it using the mke2fs command.
- Add it to /etc/fstab so that the device is mounted at boot.

Details below.

First, create an image using the qemu-img command.

Below is the command to create the image.

[ahmed@ahmed-server ~]# qemu-img create VM-1-NEW-HDD.img 100G

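This creates a 100 GB disk image. Because no -f option is given, qemu-img uses the raw format, which matches the raw storage format selected when attaching the disk.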
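A raw image is just a file with the requested apparent size. As a rough Python sketch of what the command above produces (assuming a filesystem that supports sparse files; create_raw_image and parse_size are hypothetical helpers, not part of qemu-img), truncating a new file to the target size gives an equivalent raw image:

```python
# qemu-img size suffixes are binary multiples (K = 1024, G = 1024**3).
_UNITS = {"K": 1024, "M": 1024 ** 2, "G": 1024 ** 3, "T": 1024 ** 4}


def parse_size(text):
    """Convert a qemu-img style size such as '100G' into a byte count."""
    suffix = text[-1].upper()
    if suffix in _UNITS:
        return int(text[:-1]) * _UNITS[suffix]
    return int(text)  # plain number: already in bytes


def create_raw_image(path, size_bytes):
    """Create a sparse raw disk image (hypothetical helper, not qemu-img itself).

    Mode 'xb' raises FileExistsError instead of overwriting an existing image.
    truncate() sets the apparent size without writing data, so on filesystems
    with sparse-file support no blocks are allocated until the guest writes.
    """
    with open(path, "xb") as f:
        f.truncate(size_bytes)
```

For example, create_raw_image("VM-1-NEW-HDD.img", parse_size("100G")) produces a 107374182400-byte file, the same apparent size as the image created by the qemu-img command.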

Add the image to the VM as a virtio HDD.

Method 1 - Adding the image from the virt-manager UI.

Steps to add it from the UI:

- Select the VM, then right-click -> Open.
- On the next screen, select View -> Details.
- In the new window, select Add Hardware -> Storage -> Select managed or other existing storage, and browse to the image created above.
- Select Device Type: virtio
- Select Storage Format: raw
- Select

After adding the HDD, we can see it as below.

More info here: http://unix.stackexchange.com/questions/92967/how-to-add-extra-disks-on-kvm-based-vm

Method 2 - Adding the image using the virsh command.

To edit the VM configuration, use the command below.

[ahmed@ahmed-server ~]# virsh edit VM-1

Format:

virsh edit VM-NAME

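To add the image to the VM, add the XML below inside the devices element. Point source file at the image you created, and make sure the target dev name (vda here) is not already used by another disk in the VM. The address line is optional; if it is left out, libvirt assigns a free PCI slot.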

<disk type='file' device='disk'>
  <driver name='qemu' type='raw' cache='none'/>
  <source file='/virtual_machines/images/VM-1-ADD.img'/>
  <target dev='vda' bus='virtio'/>
  <address type='pci' domain='0x0000' bus='0x00' slot='0x06' function='0x0'/>
</disk>
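If you add disks to several VMs, generating this fragment avoids typos. A minimal Python sketch using the standard library's xml.etree.ElementTree (virtio_disk_xml is a hypothetical helper) builds the same disk element and leaves out the address line, on the assumption that libvirt should pick a free PCI slot itself:

```python
import xml.etree.ElementTree as ET


def virtio_disk_xml(image_path, target_dev="vda"):
    """Build a libvirt <disk> element for a raw image on the virtio bus.

    No <address> child is emitted, so libvirt chooses the PCI slot.
    """
    disk = ET.Element("disk", type="file", device="disk")
    # Same driver settings as the hand-written XML: raw format, no host cache.
    ET.SubElement(disk, "driver", name="qemu", type="raw", cache="none")
    ET.SubElement(disk, "source", file=image_path)
    ET.SubElement(disk, "target", dev=target_dev, bus="virtio")
    return ET.tostring(disk, encoding="unicode")
```

The returned string can be pasted into the devices element opened by virsh edit; pass target_dev="vdb" if vda is already taken.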

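Now shut the VM down and start it again (for example virsh shutdown VM-1, then virsh start VM-1); a reboot from inside the guest does not pick up changes made with virsh edit. After it starts, you will see the new device.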

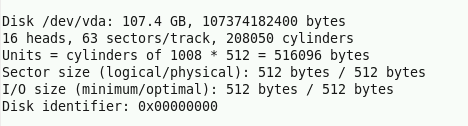

[ahmed@ahmed-server ~]# fdisk -l
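fdisk -l is what this guide uses to find the new disk. As a hedged alternative for scripts, a short Python sketch (assuming a Linux guest with sysfs mounted at /sys; list_virtio_disks is a hypothetical helper) can list virtio disks and their sizes from /sys/block, where each device's size file counts 512-byte sectors:

```python
import os

SECTOR_BYTES = 512  # sysfs reports block device sizes in 512-byte sectors


def list_virtio_disks(sys_block="/sys/block"):
    """Return {name: size_in_bytes} for virtio disks (vda, vdb, ...)."""
    disks = {}
    for name in sorted(os.listdir(sys_block)):
        if not name.startswith("vd"):
            continue  # skip sda, sr0, loop0 and other non-virtio devices
        with open(os.path.join(sys_block, name, "size")) as f:
            disks[name] = int(f.read().strip()) * SECTOR_BYTES
    return disks
```

Called with no argument inside the VM, the 100G image from step 1 should show up as an entry of 107374182400 bytes.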

The added image will appear as /dev/vda in the VM; format it using the mke2fs command.

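Before we can mount the device, we need to format it. If the VM already has a virtio disk, the new one will be /dev/vdb (or the next free letter) instead, so check the fdisk -l output and use that name below.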

[ahmed@ahmed-server ~]# mke2fs -j /dev/vda

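This formats the whole device as ext3 (-j adds a journal) and erases anything already on it, so double-check the device name first.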
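Because mke2fs overwrites the target, a cautious script might first check that the disk does not already hold an ext filesystem. A minimal Python sketch, assuming read access to the device (usually root) and relying on the ext2/3/4 superblock layout (superblock at byte 1024, 16-bit magic 0xEF53 at offset 0x38 within it); has_ext_signature is a hypothetical helper and detects only ext filesystems, not other formats or partition tables:

```python
# ext2/3/4 superblock begins at byte 1024; its 16-bit s_magic field sits at
# offset 0x38 inside it and holds 0xEF53, stored little-endian.
EXT_MAGIC_OFFSET = 1024 + 0x38
EXT_MAGIC = (0xEF53).to_bytes(2, "little")


def has_ext_signature(device_path):
    """Return True if device_path already carries an ext2/3/4 superblock."""
    with open(device_path, "rb") as dev:
        dev.seek(EXT_MAGIC_OFFSET)
        # A device or file shorter than the offset simply reads back b"".
        return dev.read(2) == EXT_MAGIC
```

For example, has_ext_signature("/dev/vda") returning True before formatting would mean the chosen disk is not the new, empty one.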

Add it to /etc/fstab so that the device is mounted at boot.

# /etc/fstab
#
# Column details here: http://man7.org/linux/man-pages/man5/fstab.5.html
# ------------------------------------------------------------------
/dev/vda /data ext3 defaults 0 0
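The six columns follow fstab(5): device, mount point, filesystem type, mount options, dump frequency and fsck pass number. To sanity-check an entry before running mount -a, a small Python sketch (parse_fstab is a hypothetical helper; it does not decode the octal escapes fstab uses for spaces) splits each non-comment line into those named fields:

```python
# Field names as used in fstab(5).
FSTAB_FIELDS = ("fs_spec", "fs_file", "fs_vfstype",
                "fs_mntops", "fs_freq", "fs_passno")


def parse_fstab(text):
    """Split fstab text into one dict per entry (hypothetical helper).

    Octal escapes such as \\040 (a space in a path) are not decoded.
    """
    entries = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and comments carry no entry
        fields = line.split()
        if not 4 <= len(fields) <= 6:
            raise ValueError(f"expected 4-6 fields, got {len(fields)}: {line!r}")
        # fs_freq and fs_passno default to 0 when omitted.
        fields += ["0"] * (6 - len(fields))
        entries.append(dict(zip(FSTAB_FIELDS, fields)))
    return entries
```

Run against the file above, it yields a single entry whose fs_file is /data and whose fs_vfstype is ext3.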

Create the /data mount point if it does not exist yet (mkdir /data), then check by mounting everything listed in /etc/fstab.

[ahmed@ahmed-server ~]# mount -a

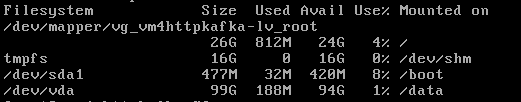

Check that the new disk is mounted on /data by running the command below.

[ahmed@ahmed-server ~]# df -h
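For scripted checks, a Python sketch using shutil.disk_usage and os.path.ismount can confirm that /data is really a separate mount and report its size; describe_mount is a hypothetical helper, and its output only approximates a df -h row:

```python
import os
import shutil


def describe_mount(path):
    """Summarise a mounted filesystem, roughly like one row of `df -h`."""
    if not os.path.ismount(path):
        # A plain directory here means the fstab entry did not mount.
        raise RuntimeError(f"{path} is not a mount point")
    usage = shutil.disk_usage(path)
    gib = 1024 ** 3
    return (f"{path}: {usage.total / gib:.1f}G total, "
            f"{usage.used / gib:.1f}G used, {usage.free / gib:.1f}G free")
```

Inside the VM, describe_mount("/data") raises RuntimeError if the disk did not mount, which is easier to catch in a script than parsing df output.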

Useful Links.

http://xmodulo.com/install-configure-kvm-centos.html

http://www.cyberciti.biz/faq/kvm-virtualization-in-redhat-centos-scientific-linux-6/

https://www.howtoforge.com/vnc-server-installation-centos-6.5

http://wiki.centos.org/HowTos/KVM

https://www.howtoforge.com/virtualization-with-kvm-on-a-centos-6.4-server-p4

https://access.redhat.com/documentation/en-US/Red_Hat_Enterprise_Linux/5/html/Virtualization/sect-Virtualization-Virtualized_block_devices-Adding_storage_devices_to_guests.html

http://unix.stackexchange.com/questions/92967/how-to-add-extra-disks-on-kvm-based-vm

http://www.techotopia.com/index.php/Adding_a_New_Disk_Drive_to_a_CentOS_6_System

http://blog.zwiegnet.com/linux-server/add-new-hard-drive-to-centos-linux/

http://man7.org/linux/man-pages/man5/fstab.5.html
